Shopify A/B Testing: How To Increase Conversion Rate

Master Shopify A/B testing methods, metrics, and tools to lift conversions and revenue fast.

Last updated: 2026-01-09

Takeaways

Iterative A/B testing gives brands a quantitative way to track which changes improve their online store's performance.

Throughout the A/B test, you track key page performance metrics, such as conversion rates, click-through rates, or bounce rates, to decide which version works best towards reaching your business goals.

Effective testing relies on gathering enough data for significance and running one clear, structured experiment at a time.

What Is Shopify A/B Testing

If you run a Shopify store, A/B testing can replace guesswork with quantifiable evidence: you compare two versions of a single element on a page and measure the performance impact of each version.

What is A/B Testing?

A/B testing, or split testing, means creating two or more variations of a single element (the control is the original, and the variant is the new version) and then randomly directing equal shares of traffic to each one to see which performs better.

So for example, store owners can compare two versions of a page—Version A and Version B—to see which leads to more clicks on the CTA. Half of shoppers see Version A, the other half see Version B. The resulting data shows which variation converts best, whether that’s a headline tweak or a checkout layout shift. The winning version is then applied across other pages.

Shopify makes it straightforward to experiment with specific elements. Merchants often test product image layouts, button placements, or pricing displays. Shopify data shows brands that run structured tests can see conversion rate lifts of 10% or more when iterations are applied consistently.

Why A/B Testing Boosts Ecommerce Revenue

Every small improvement in conversion rate compounds across your funnel. Adjusting elements like CTAs, page sections, or shipping messages creates ripple effects in purchase totals and repeat buyer value.

Here are some common changes that brands make and the corresponding metrics that these changes are meant to impact:

- Higher conversion rates: More visitors become paying customers

- Increased average order value: Better product presentation and offers (bundling, subscriptions) drive larger purchases

- Reduced cart abandonment: Optimized checkout flows keep customers engaged from first click to checkout

Who Should Start A/B Testing On Shopify

Steady traffic, a clear conversion goal, and consistent tracking are required for clear and conclusive results. If you’re brand new or mid-rebuild, you might want to start with customer feedback or session replays before formal tests.

Here are a few guidelines you should meet first to start testing:

- You average several hundred sessions per week on target pages.

- Your analytics goals and revenue tracking are configured correctly.

- Your product and pricing are stable during the test window.

- Your team can run a test for at least two full weeks.

Don’t test during a major redesign or when traffic is too low to hit sample size, as A/B tests require stable conditions to generate cleaner comparisons and reliable decisions that inform future product-page builds.

In those cases, focus on qualitative research and usability studies until your brand fulfills the conditions above.

Key Metrics And Sample Size You Will Need

The goal of your test will define how you want to determine your winner. No matter the metric, however, you will want to make sure your A/B test reaches statistical significance.

Statistical significance is the mathematical measure of confidence that the difference in performance between test variations is real and not due to random chance.

In A/B testing, we usually aim for a 95% confidence level, meaning that if the variations truly performed the same, a difference this large would appear by chance only about 5% of the time. This threshold helps prevent false positives where you implement a "winning" variation that doesn't actually improve conversions.
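As a rough illustration of that 95% threshold, here is a minimal sketch of one common way to check significance for conversion rates, a two-proportion z-test. The function name and the visitor counts are hypothetical, and your testing tool may use a different statistical method.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate, assuming both versions actually convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Chance of seeing a gap at least this large if there were no real difference.
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical counts: 5,000 visitors per version.
p_value = two_proportion_p_value(conv_a=150, n_a=5000, conv_b=190, n_b=5000)
is_significant = p_value < 0.05  # 95% confidence threshold
print(f"p-value: {p_value:.4f}, significant: {is_significant}")
```

With these made-up counts the p-value falls below 0.05, so the difference would count as significant at the 95% level.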

Before you start a test, use a statistical significance calculation to determine the sample size and test duration required to get a significant winner. This prevents premature and inconclusive calls.

Learn more in our detailed guide on how to calculate statistical significance and what it means. Use our A/B test statistical significance calculator to see how many visitors each variation needs.

Here are a few metrics that brands often focus on when running an A/B test:

Bounce Rate

It’s the share of sessions where visitors leave without another action. If bounce drops on your variant, you improved first-impression relevance. Keep that metric secondary unless the page’s primary goal is engagement.

Conversion Rate

This is the percentage of visitors who complete your defined action, such as a purchase, signup, or add-to-cart. Use it when the goal of your test is a direct response.

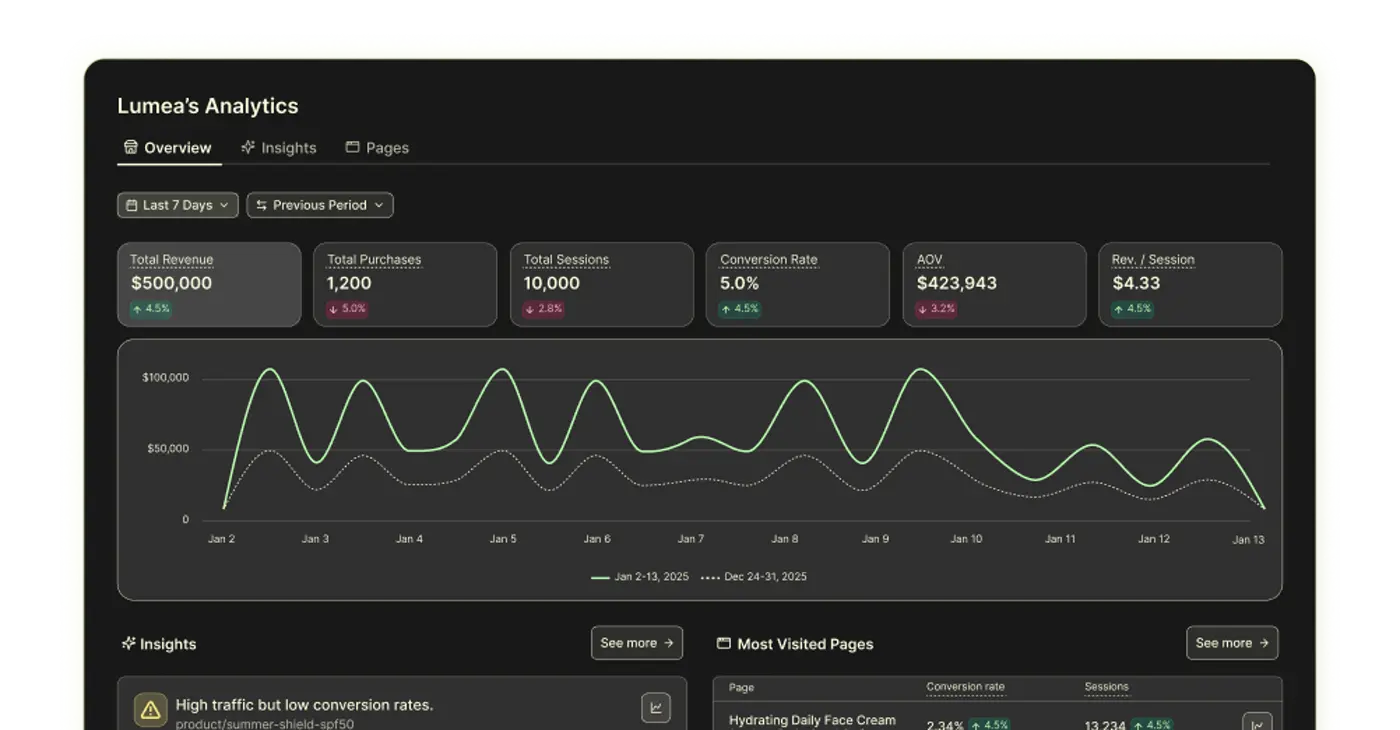

Revenue Per Session

Revenue per session reveals total earning power per visit. It’s often the most honest ecommerce metric because it bakes in both conversion and order value.
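To see how these metrics relate, here is a small sketch that computes them from raw totals. The function name and all figures are invented for illustration. Note how the variant wins on conversion rate but loses on revenue per session because its average order value is lower.

```python
def summarize_variant(sessions: int, bounced_sessions: int, orders: int, revenue: float) -> dict:
    """Compute the core A/B test metrics from raw totals for one variation."""
    return {
        "bounce_rate": bounced_sessions / sessions,
        "conversion_rate": orders / sessions,
        "average_order_value": revenue / orders if orders else 0.0,
        "revenue_per_session": revenue / sessions,
    }


# Hypothetical totals for a two-week test.
control = summarize_variant(sessions=4000, bounced_sessions=1800, orders=120, revenue=7200.0)
variant = summarize_variant(sessions=4000, bounced_sessions=1700, orders=132, revenue=7128.0)

for name, metrics in (("control", control), ("variant", variant)):
    print(name, {key: round(value, 3) for key, value in metrics.items()})
```

Revenue per session equals conversion rate multiplied by average order value, which is why it captures both effects in one number.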

Minimum Detectable Lift

This is the smallest improvement you care to detect in your A/B test. Choose it based on impact. Remember that a smaller minimum detectable lift will require a larger sample to prove. We recommend using a significance calculator such as this one to determine sample size needs.
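The trade-off between lift and sample size can be sketched with a standard approximation for comparing two conversion rates. This is an estimate under stated assumptions, not a replacement for a calculator: the function name is hypothetical, the baseline rate is made up, and the 80% statistical power setting is a common default that this guide does not specify.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline_rate: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed per variation to detect a relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided 95% confidence by default
    z_power = NormalDist().inv_cdf(power)          # assumed 80% power by default
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical store with a 3% baseline conversion rate.
small_lift = visitors_per_variant(baseline_rate=0.03, relative_lift=0.10)
large_lift = visitors_per_variant(baseline_rate=0.03, relative_lift=0.20)
print(f"10% lift: {small_lift} visitors per variant")
print(f"20% lift: {large_lift} visitors per variant")
```

Halving the lift you want to detect requires several times more visitors, which is why low-traffic stores usually need to aim for bigger changes.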

Test Duration

Run tests in full-week increments through at least two business cycles. Many stores need two to four weeks to capture weekday and weekend fluctuations (Shopify).
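As a back-of-the-envelope sketch, you can turn a required sample size into a duration by rounding up to whole weeks and applying the two-week minimum. The function name and traffic figures below are hypothetical.

```python
from math import ceil


def weeks_to_run(visitors_per_variant: int, variants: int,
                 weekly_sessions: int, min_weeks: int = 2) -> int:
    """Estimate test length in full weeks, never shorter than min_weeks."""
    total_needed = visitors_per_variant * variants
    # Round up so the test always ends on a full-week boundary.
    weeks = ceil(total_needed / weekly_sessions)
    return max(weeks, min_weeks)


# Hypothetical page with 4,500 sessions per week and two variations.
print(weeks_to_run(visitors_per_variant=6000, variants=2, weekly_sessions=4500))  # 3
```

If the estimate comes out far longer than four weeks, that is a hint to test a bolder change or a higher-traffic page.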

How To Run An A/B Test For Your Shopify Store

Below is a high-level overview of what you need to know to start running an A/B test for your landing pages.

To learn how to run an A/B test, no matter if you’re a beginner or advanced user, access the full guide here.

1. Collect Quantitative Data

Find weak points in your funnel. Look for pages with high exits or sharp drop-offs. Validate that analytics events align to your goals before you start, either in Replo Analytics or any analytics tool of your choice.

2. Gather Qualitative Insights

Survey hesitant shoppers and watch session replays. You’ll learn which objections to tackle and which elements confuse people.

3. Draft A SMART Hypothesis

Make sure your hypothesis is specific, measurable, achievable, relevant, and time-bound (or SMART for short). This increases the success rate of your experiment and lets you draw clear conclusions based on your test results.

For example, a poorly constructed hypothesis would be “making a product page more visually appealing will increase conversion.”

A better hypothesis would be “by replacing the current product images with lifestyle images, we expect to see a 10% increase in add-to-cart conversions within two weeks, because lifestyle images better demonstrate the product’s functionality and inspire customers to envision themselves using it.”

This hypothesis clearly states the change (new lifestyle images), the expected outcome (10% increase in add-to-cart conversions), and the rationale (better product demonstration and customer inspiration).

4. Split Traffic Evenly

Randomize traffic assignments to keep your test valid. Keep device and geography mixes balanced.
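Most A/B testing tools handle the split for you. If you are curious how an even, consistent split can work, here is a minimal sketch using a hash of the visitor ID. The function name, visitor IDs, and experiment name are hypothetical, and this is not a description of how any particular tool assigns traffic.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str, variants=("A", "B")) -> str:
    """Assign a visitor to a variation, consistently across repeat visits."""
    # Including the experiment name keeps splits independent between tests.
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


# Hypothetical visitor IDs: the same visitor always gets the same version.
print(assign_variant("visitor-123", "homepage-headline"))
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}", "homepage-headline")] += 1
print(counts)  # close to an even split
```

Because the assignment depends only on the visitor ID and the experiment name, it does not favor any device or region, which helps keep those mixes balanced across versions.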

5. Run Until Significance Hits

Once you start running an A/B test, avoid stopping tests prematurely, even if early results look promising. Ending a test too soon can lead to inaccurate conclusions due to small sample sizes.

Let the test run to the predetermined sample or end date.

Remember to also account for the time required to reach statistically significant results. This period will vary based on your store's traffic and the impact of the changes you're testing. Tests of high-impact elements like CTAs may conclude faster than tests of smaller changes, because larger differences take fewer visitors to detect.

Also, do not conduct several A/B tests on the same page at the same time, which can cause interaction effects that skew results. Run tests sequentially instead.

Let your test run until it has reached the predetermined sample size or time duration (usually at least two business cycles, or around two to four weeks). Trust the math and wait for the final numbers.

Implement the winning variation across your pages and archive outcomes for future learning.

What To A/B Test? How To Increase Conversion Rate

One of the biggest questions A/B testing tries to answer for ecommerce brands is how to increase conversion rate.

Industry standard has given us a list of best practices for Shopify pages to do exactly that, but oftentimes brands do not know if a change has led to a definite improvement of business metrics until they test it.

For most stores, there are a handful of key landing page elements that are prime targets for A/B testing.

We cover this topic in detail in our checklist of A/B tests to run on your Shopify store, but here are a few quick items to get you started.

Headers and Subheaders: Try out different header and subheader copy, styles, and sizes to capture visitors' attention and clearly communicate your brand value. Don’t be afraid to insert more emotion into the headlines.

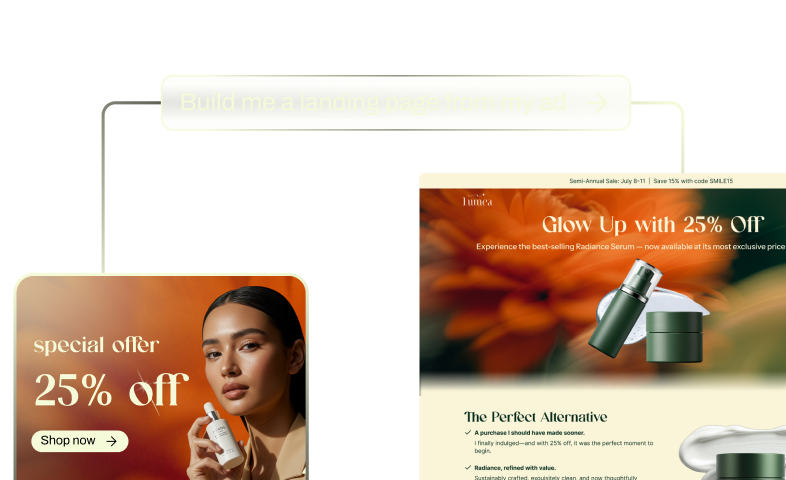

Replo Builder can help you generate new on-brand and product-targeted copy to test in a matter of seconds.

CTA Buttons: Experiment with different text, colors, sizes, and placement of your call-to-action buttons to find the most effective combination for driving conversions.

Images and Videos: Evaluate the impact of different product images, lifestyle photos, and videos on user engagement and conversion rates. Test variations in style and placement.

Product Descriptions: Experiment with the length, format, and content of your product descriptions to find the optimal balance between providing necessary information and keeping visitors engaged.

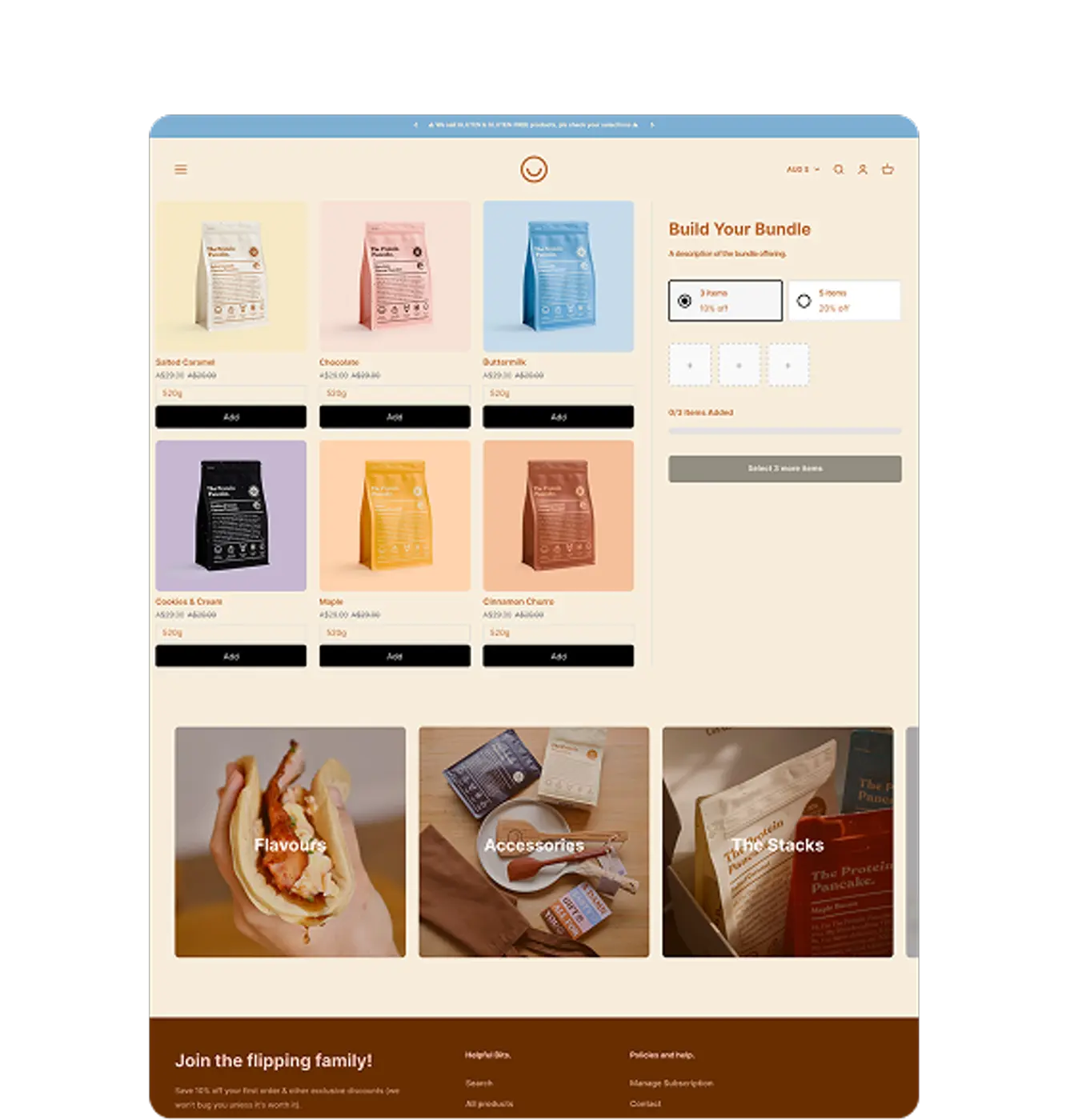

Pricing: Try out various pricing displays, such as emphasizing discounts, showing price comparisons, or bundling products, to find the most appealing presentation for your audience.

Our Template Library comes with hundreds of ready-to-use section and product page templates, each with a different pricing display that you can customize and A/B test in your store.

Social Proof: Experiment with the placement and design of customer reviews, ratings, user-generated content, and testimonials. The key is to build credibility with shoppers to encourage conversions.

Navigation: You want shoppers to find what they need as quickly and easily as possible. That means you need well-organized site navigation. Measure the quality of your site navigation by testing different menu structures, item labels, and categorization.

Focusing on these elements for designing high-converting landing pages will help you optimize your Shopify store conversions faster.

Advanced Testing Methods To Consider

As brands grow more familiar with running A/B tests, they move toward multi-element and funnel-level optimization.

Below is a quick overview of the different types of advanced A/B tests we’ve seen; for a more in-depth breakdown, visit our guide on how to run A/B tests for advanced users.

Multivariate Testing

You test combinations of elements at once. This requires higher traffic because the number of variants grows quickly.

For example, you might test three different headlines, two images, and two call-to-action buttons—resulting in 12 possible combinations (3x2x2). Multivariate tests are useful for finding the best combination of elements on a complex page.
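To make the arithmetic concrete, this short sketch enumerates every combination for that example. The placeholder labels are made up.

```python
from itertools import product

# Placeholder labels for the elements in the example above.
headlines = ["Headline 1", "Headline 2", "Headline 3"]
images = ["Image 1", "Image 2"]
cta_buttons = ["CTA 1", "CTA 2"]

# Every combination of one headline, one image, and one CTA button.
combinations = list(product(headlines, images, cta_buttons))
print(len(combinations))  # 12

for headline, image, cta in combinations:
    print(headline, "|", image, "|", cta)
```

Each combination needs its own share of traffic, so adding just one more option to any element increases the visitors required.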

Funnel Testing

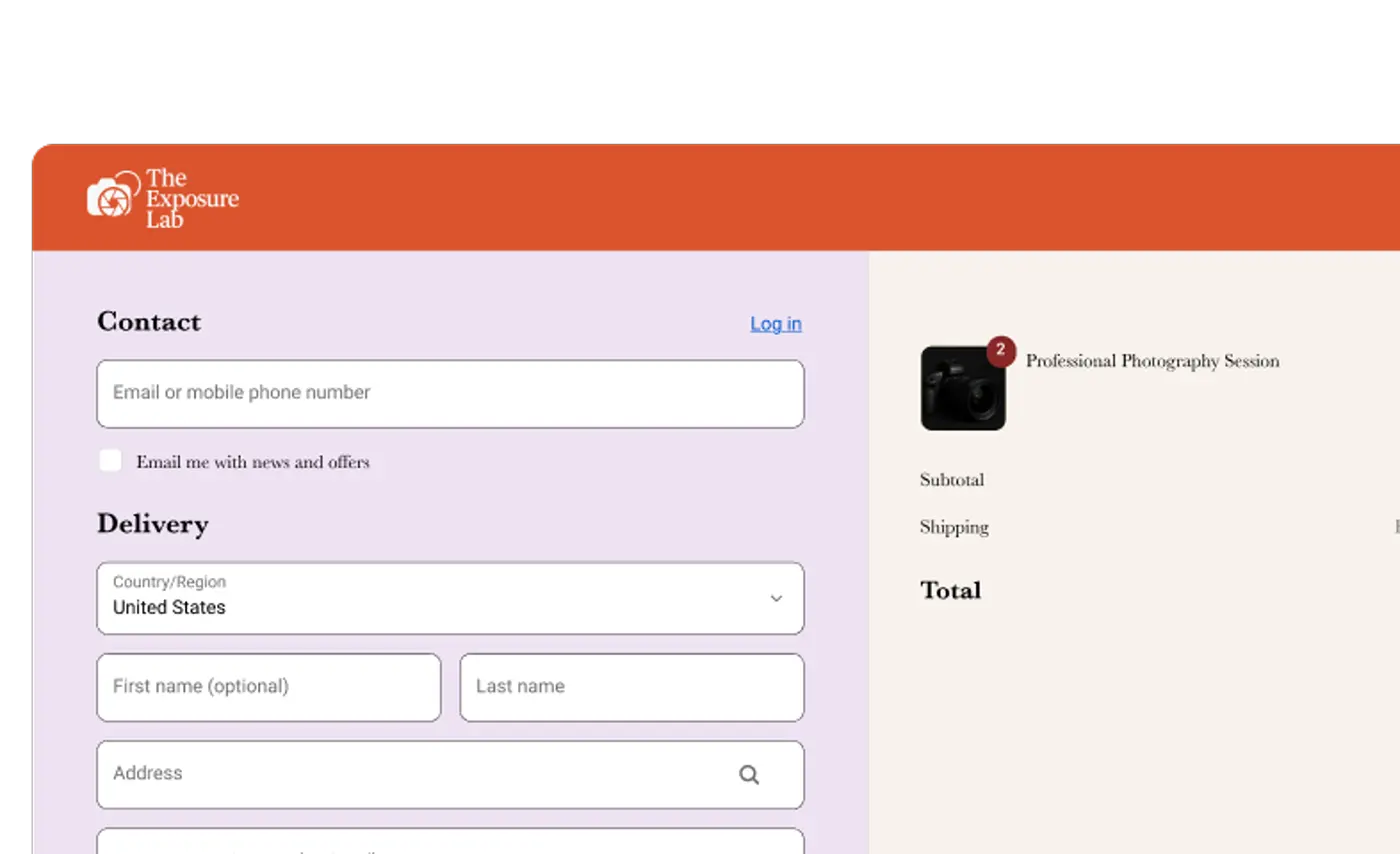

This test measures the impact across a journey, not just one page. Keep goals consistent across steps to see true downstream effects.

Funnel tests let you test changes across an entire sequence of pages, like the paid media to landing page funnel A/B test that VaynerCommerce ran for POSSIBLE. They show you how changes on one page affect behavior further down the funnel.

Personalization Segments

Brands can deliver variants only to specific audiences like return buyers or paid-social visitors to create a tailored experience for shoppers. In these tests, segmentation rules must be thorough to avoid creating unclear data.

Split URL Tests (or Redirect Tests)

This experiment involves testing two entirely different web pages against each other using separate URLs. Half of the traffic is sent to the original URL (control) and the other half to a variation URL.

This is useful for testing radical redesigns or very different landing pages for the same campaign.

Common Testing Mistakes To Avoid

Testing Too Many Variables

Change one thing at a time unless you have multivariate traffic levels. Otherwise, you won’t know what caused the fluctuation in your results.

Stopping Tests Too Early

Early wins often regress. As Shopify notes, you should run full cycles to reduce the risk of calling noise a signal. Patience protects profit.

Ignoring Revenue Impact

Conversion rate isn’t everything. Track revenue per session and AOV to ensure you aren’t trading dollars for clicks.

Overlooking Mobile Users

Device experiences can differ between desktop and mobile. Validate that your variant renders perfectly on small screens before launch.

Also watch for session duplication from cookie resets or device switching during longer tests. Overruns increase the risk of sample pollution, so avoid extending a test beyond its planned window.

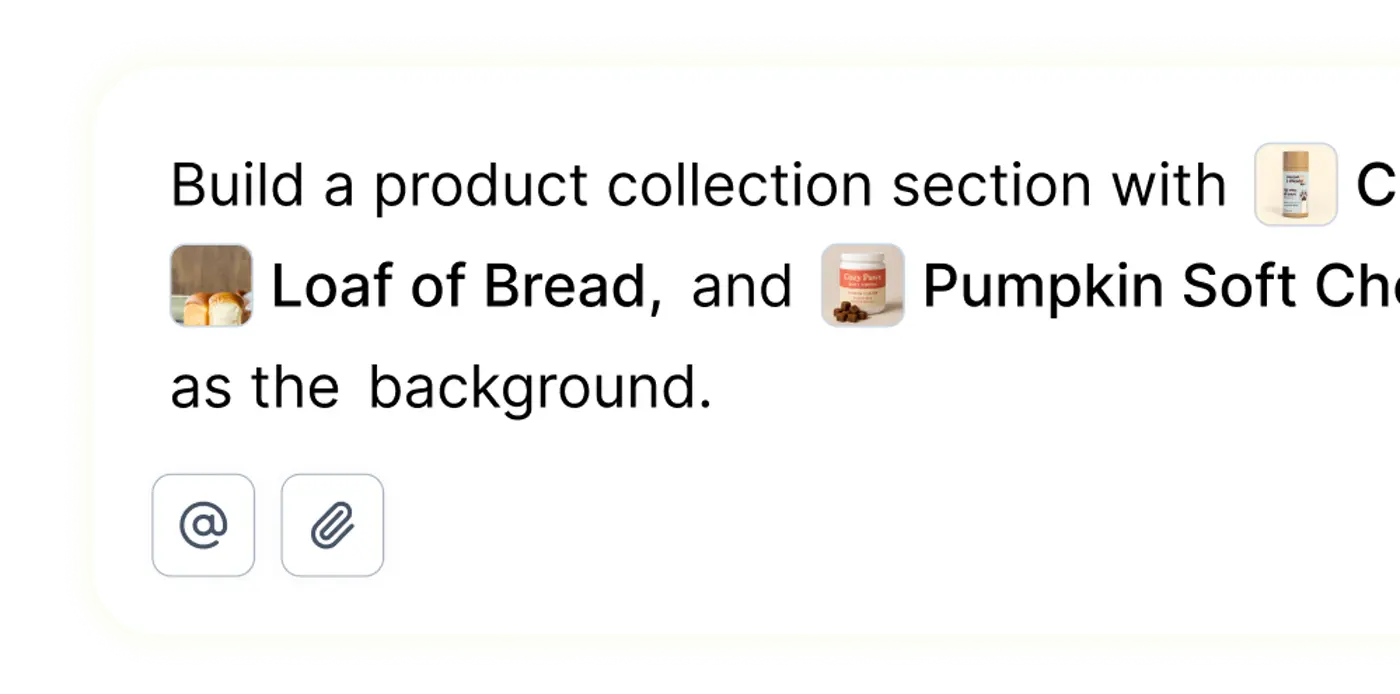

Run A/B Tests On Your Pages With Replo

Replo allows you to design, launch, and measure in one place. Build brand-customized pages with a simple prompt, without any technical skills, and start iterating with results from our A/B testing and Analytics tools.

To get started, simply give Replo chat a short prompt on what you want to build and what product you’re selling. Drop in a webpage URL to use as inspiration or as a direct reference if you have one. It’s that easy.

Sign up for free to start building, testing, and improving in minutes rather than weeks.

FAQs About Split Testing On Shopify

How long should a Shopify A/B test run?

You should aim for at least two full business cycles to catch weekday and weekend patterns. Many stores land on two to four weeks for clean results.

Can I split test Shopify checkout pages without Shopify Plus?

You can influence checkout completion by testing product and cart pages. Checkout customization is limited on basic plans, so test upstream elements.

What traffic volume is enough for A/B testing retail audiences?

You need steady traffic so each variant reaches the planned sample within weeks. Several hundred visitors per week per variant is a practical floor.

Does split testing Shopify pages slow down my site speed?

Well-implemented tools add minimal overhead if configured correctly. Even so, you should QA performance and monitor core metrics during each test.

Your full-stack growth engine

From small brands to scaling teams, sell anything on the Internet with Replo.