How To A/B Test Landing Pages: A/B Testing For Beginners And Advanced Users

Everything you need to know about how to A/B test landing pages for your store.

Last updated: 2025-11-13

Takeaways

A/B testing is a method of comparing two versions of a webpage to see which one drives better results for a certain user behavior.

A/B tests are essential for iterating and optimizing your business, and for mitigating risk for your store.

Simpler A/B tests usually involve smaller scale, single-element changes with only two versions. Advanced A/B tests involve larger scale, more complex changes with more than two variants.

What Is A/B Testing

A/B testing (also called split testing) is a method of comparing two versions of a webpage or digital element to determine which performs better based on actual user behavior.

It works by splitting traffic evenly between Version A (the control) and Version B (the variation), then measuring which version drives better results for your goal (conversions, revenue, signups, etc.).

A winner is declared only once the results are statistically significant, typically at 95% confidence or higher, so that the difference is unlikely to be the product of random chance.

For ecommerce, you might test product page layouts, checkout processes, CTA button placement, hero images, pricing displays, or promotional messaging. The goal is to make data-driven decisions rather than relying on opinions or assumptions.

What is A/B Testing?

A/B testing compares two different versions of the same thing, usually a control version and a newly-changed variable version, to measure which version performs better for a certain metric. The winning version is announced once the test has reached statistical significance.

Why You Need A/B Testing For Your Store

A/B testing is a great option for optimizing your business, because:

- Small changes can translate into high-impact shifts for your business. Even a 1% relative increase in conversion rate adds up: on an ecommerce site with $10 million in revenue, it is worth roughly $100,000 in extra revenue, without any additional marketing spend.

- Plus, even if each winning variant delivers only a modest change, multiple small wins compound. For example, three tests with 20%, 30%, and 30% lifts don't just add up to 80%. They multiply (1.2 × 1.3 × 1.3 ≈ 2.03), so performance roughly doubles, which is an improvement of about 103%.
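If you'd like to check the compounding math yourself, here is a minimal Python sketch. The function name `compound_lift` is ours, purely for illustration; it multiplies the lifts together instead of adding them.

```python
from math import prod

def compound_lift(lifts):
    """Combine sequential relative lifts (0.20 means +20%) into one overall lift."""
    return prod(1 + lift for lift in lifts) - 1

# Three winning tests with 20%, 30%, and 30% lifts
overall = compound_lift([0.20, 0.30, 0.30])
print(f"Overall lift: {overall:.1%}")
```

With these three lifts, performance ends up at about 2.03 times the starting point, which is an improvement of roughly 103%.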

A/B testing is also great for mitigating risk for brands:

- Remember, A/B tests can go both ways: you can discover variants that improve performance as well as variants that hurt it. While many A/B tests fail to produce winners, the upside of experimenting is that it stops changes that would quietly decrease conversions from being rolled out.

- Testing also eliminates biased decision making and allows the data to speak for itself.

Last but not least, A/B testing is one of the best ways to discover insights about customer behavior.

This includes figuring out which psychological tactics and content messages resonate with your target audience, and how different segments of users react differently in your store.

All these insights should be collected as part of a central knowledge base that you use to inform the best practices unique to your brand.

Statistical Significance: Why It Matters For Your A/B Tests

Before you launch anything, you will need to pay attention to statistical significance in your A/B test results. Here’s what you should know:

What is statistical significance?

Statistical significance is the mathematical measure of confidence that the difference in performance between test variations is real and not due to random chance.

In A/B testing, we usually aim for a 95% confidence level, meaning that if there were truly no difference between the versions, a result at least this extreme would show up only 5% of the time. This threshold helps prevent false positives, where you implement a "winning" variation that doesn't actually improve conversions.

Calculating statistical significance often stops at this first parameter for most use cases and tools.

If you want to put in even more failsafes to avoid false negatives (when we see no significant difference and conclude there is none, even though one exists), two more parameters are needed:

- the minimal difference in performance you’d like to detect, known as minimum detectable effect

- the probability of detecting that difference if such exists, known as statistical power, which is usually kept at 80%.

For the purposes of this article, and for most ecommerce uses, just focusing on a 95% confidence level and regulating the sample size of your test is enough.

Sample size plays a crucial role: the larger your sample, the smaller the margin of error, and the more confident you can be that your test results reflect true user behavior rather than random variation.

For ecommerce use cases, calculating statistical significance (this is where it gets a little math-heavy!) usually involves a two-proportion z-test or a chi-square test, either of which produces a p-value: the probability of seeing results at least as extreme as yours if no real difference exists.

The calculation requires the sample size for each variation, the conversions in each group, and your desired confidence level. If your p-value is below your significance level (0.05 for 95% confidence), the difference is statistically significant.
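For the curious, here is a minimal sketch of a two-proportion z-test in Python, using only the standard library. The function name and the visitor and conversion counts are hypothetical, and your calculator or testing platform may use a slightly different method (for example, a chi-square test or a Bayesian model).

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates; returns (z, p_value)."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate: the shared conversion rate assumed under "no real difference"
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical counts: control converts 200 of 10,000 visitors, variant 260 of 10,000
z, p = two_proportion_z_test(conv_a=200, n_a=10_000, conv_b=260, n_b=10_000)
print(f"z = {z:.2f}, p = {p:.4f}, significant at 5%: {p < 0.05}")
```

In this made-up example the p-value comes out well below 0.05, so the difference would count as statistically significant at the 95% confidence level.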

To calculate the sample size your A/B test needs to reach statistical significance, you can use any A/B testing sample size calculator online.

We like this sample size calculator if you want more control over your parameters. By default, you can keep the statistical power at 80% and the significance level at 5%.

The minimum detectable effect (MDE) is the smallest change in your current conversion rate, expressed in absolute or relative terms, that you want your test to be able to detect.

Absolute MDE adds percentage points directly to your current conversion rate for positive effects (or subtracts them for negative effects). For example, if your current conversion rate is 5% and your MDE is 3%, then your absolute MDE range would be 2% to 8%.

As its name suggests, relative MDE applies the percentage (either positively or negatively) relative to your current conversion rate. So, in the same example, relative MDE would be calculated with a lower bound of 5 × 0.97 = 4.85 and an upper bound of 5 × 1.03 = 5.15. The range would be 4.85% to 5.15%, instead of 2% to 8%.

In most cases, relative MDE is used, as most marketing and business teams think about changes in conversion rate in relative terms.

However, for the same percentage value, running an A/B test with relative MDE requires a much greater sample size, because it describes a much narrower range of effect. Detecting a small effect with statistical significance is much harder than detecting a large one.

As a result, a greater sample size (and by extension a longer test run period) is required to get significant results.
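To make the two definitions concrete, here is a small Python sketch that reproduces the 5% baseline and 3% MDE example. The helper `mde_range` is a hypothetical name of our own, not part of any calculator.

```python
def mde_range(baseline, mde, relative):
    """Return the (low, high) conversion rates implied by an MDE.

    All values are fractions (0.05 means 5%). When relative is False, mde is
    read as percentage points; when True, as a share of the baseline.
    """
    delta = baseline * mde if relative else mde
    return baseline - delta, baseline + delta

abs_low, abs_high = mde_range(0.05, 0.03, relative=False)
rel_low, rel_high = mde_range(0.05, 0.03, relative=True)
print(f"Absolute MDE range: {abs_low:.2%} to {abs_high:.2%}")
print(f"Relative MDE range: {rel_low:.2%} to {rel_high:.2%}")
```

The relative range is far narrower than the absolute one, which is why the same "3%" demands so much more traffic when it is read as a relative effect.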

It’s important to note that the definition of “statistically significant” varies across brands and industries. Primary factors for this include risk tolerance and business context.

For example, enterprise companies in more heavily-regulated industries like finance or healthcare often require 99% confidence. In these industries, false positives could mean millions in lost revenue or compliance issues.

On the other hand, early-stage startups or smaller brands might accept 90% confidence to make faster decisions with limited data, valuing speed over certainty.

Ecommerce brands with high average order values, such as brands selling home goods or electronic goods, need higher confidence levels in A/B test results, because each store conversion is worth more. Meanwhile, this may not hold true for brands with lower AOV, such as skincare and beauty.

On top of that, customer behavior tends to vary across different times of the year and geographies. For example, a campaign run between November and December (peak holiday shopping season) in the United States would lead to very different results compared to the same campaign run in the summer in Australia.

Traffic volume drives logistical differences during testing too. For example, brands with millions of monthly visitors can detect small 2-3% improvements with high confidence in days. Meanwhile, smaller brands might need months to reach significance or must focus on detecting only larger 20%+ changes.

The variation also stems from different statistical philosophies. That topic, however, is a little too technical to cover in this article.

All you need to know is that the Frequentist approach is the most commonly used and historically prevalent method, while recent industry trends have leaned towards the newer Bayesian approach.

For those interested, read this article on A/B testing philosophies to learn more.

A/B Testing Strategy For Beginners

Beginners should start by doing research with Google Analytics, heatmaps, session recordings, and customer surveys to identify high-traffic pages with poor metrics.

The metrics you focus on will differ based on your performance goals (e.g., clickthrough rate, average order value, bounce rate, scroll depth, add-to-cart rate), though most brands focus on conversion rates, since these impact business topline the most.

Next, develop strong hypotheses grounded in measurable observations, each proposing a specific change and an expected outcome with clear reasoning. This includes the exact metric you expect to move and a predicted, measurable impact.

During execution, beginners should run simple A/B tests with just two versions, test single elements to isolate impact, and commit to full 2-4 week test durations (about 2 full business cycles on average) to give the test enough time to reach statistical significance.

Make sure to document all learnings, including failed or non-conclusive tests.

Common mistakes to avoid include:

- testing with insufficient traffic

- running too many variations simultaneously

- stopping tests prematurely

- testing multiple elements at once per variant

Be aware that general industry best practices may not help your store unless you first validate them with your specific audience.

Every brand is a little different—you want to cater to your target audience first and foremost.

How To Run A/B Testing For Beginners

If you're new to A/B testing, follow this step-by-step guide to get started.

When You Should Start Beginner A/B Testing

You're ready when you have:

- 1,000+ weekly visitors (5,000+ monthly) to pages you'll test

- 100+ conversions per month minimum

- Google Analytics properly installed with goals configured

- 2-4 weeks of baseline data showing stable traffic patterns

- Resources to implement winning variations after testing

You're NOT ready if:

- Your website has major bugs or technical issues

- Traffic fluctuates wildly week-to-week

- You're in the middle of a major store redesign

- You have fewer than 5-10 conversions weekly

If you lack traffic: Start with email A/B testing (needs only 500-1,000 subscribers) or PPC ad testing (5,000+ impressions) to build experimentation skills while growing site traffic.

This gives you a taste of how A/B testing works on a smaller scale, and it can give you ideas for what to test at a larger scale when your store is ready.

For example, if you see that a certain CTA button performs really well in driving clicks in your newsletters, you might note that down as a future element to A/B test in your webpages.

What You Should A/B Test

Now that we’ve covered when you should test, let’s cover what specific elements you should target.

Here’s the order of priority that we recommend:

1. High-Traffic, High-Impact Pages First

- Homepage (your highest traffic page)

- Top 3-5 product pages by traffic

- Checkout process

- Category pages

2. Specific Elements to Test (Start Simple)

Homepage:

- Hero section headline and subheadline

- Primary CTA button text and placement

- Hero image/video

- Trust signals (free shipping, guarantees)

Product Pages:

- Add to Cart button placement (above vs. below fold)

- Product image type (lifestyle vs. white background)

- Number of images shown

- Product description length and format

- Social proof placement (reviews, ratings)

- Trust badges location

Checkout:

- Guest checkout vs. required account creation

- Number of form fields

- Progress indicators

- Security badges placement

- Shipping cost visibility

Remember to always go top down on the page. Start with elements above the fold—80% of users never scroll down before bouncing.

Step-by-Step A/B Testing Guide For Beginners

Now that we’ve covered when and what you should test, let’s dive into this step by step process.

We are covering an example use case to better illustrate our points, so make sure to adapt the goals and metrics of this guide to fit your own test!

Step 1: Identify the Problem

We recommend taking a look at your Google Analytics dashboard and looking for:

- High-traffic pages with low conversion rates

- Pages with high bounce rates

- Checkout abandonment points

Example finding: Your best-selling product page gets 5,000 monthly visits but only 2% add to cart (100 conversions). Analytics shows 70% of visitors never scroll below the fold.

Step 2: Add Heatmap Tracking

Install Hotjar or Microsoft Clarity to see live user interactions with your site. Replo integrates directly with both of these tools, so you can get up to date heatmap tracking on any of your Replo pages.

Take note of:

- Where users click

- How far they scroll

- What they ignore

Step 3: Write Your Hypothesis

As mentioned previously, make sure your impact is measurable and quantifiable.

We like this template: "Based on [data], we believe that [change] will [impact] because [reasoning]."

For example: "Based on heatmap data showing 70% of mobile users never scrolling below the fold, we believe moving the Add to Cart button above the fold will increase add-to-cart rates by at least 15% because users won't have to search for the primary conversion action."

Step 4: Calculate Sample Size

Use a sample size calculator to work out how many visitors you will need for your test results to be statistically significant rather than the product of random variation.

For example, if you have these numbers for a simple A/B test:

Current conversion rate: 2%

Relative minimum detectable effect: 15%

Confidence level: 95%

Statistical power: 80%

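Then you will need nearly 35,000 visitors per variation according to our recommended calculator, or about 70,000 visitors in total. At 5,000 monthly visits to the page, that would take roughly 14 months, which is far too long to be practical. If you land in this situation, test a higher-traffic page or target a larger minimum detectable effect so that the test can finish within a few weeks.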

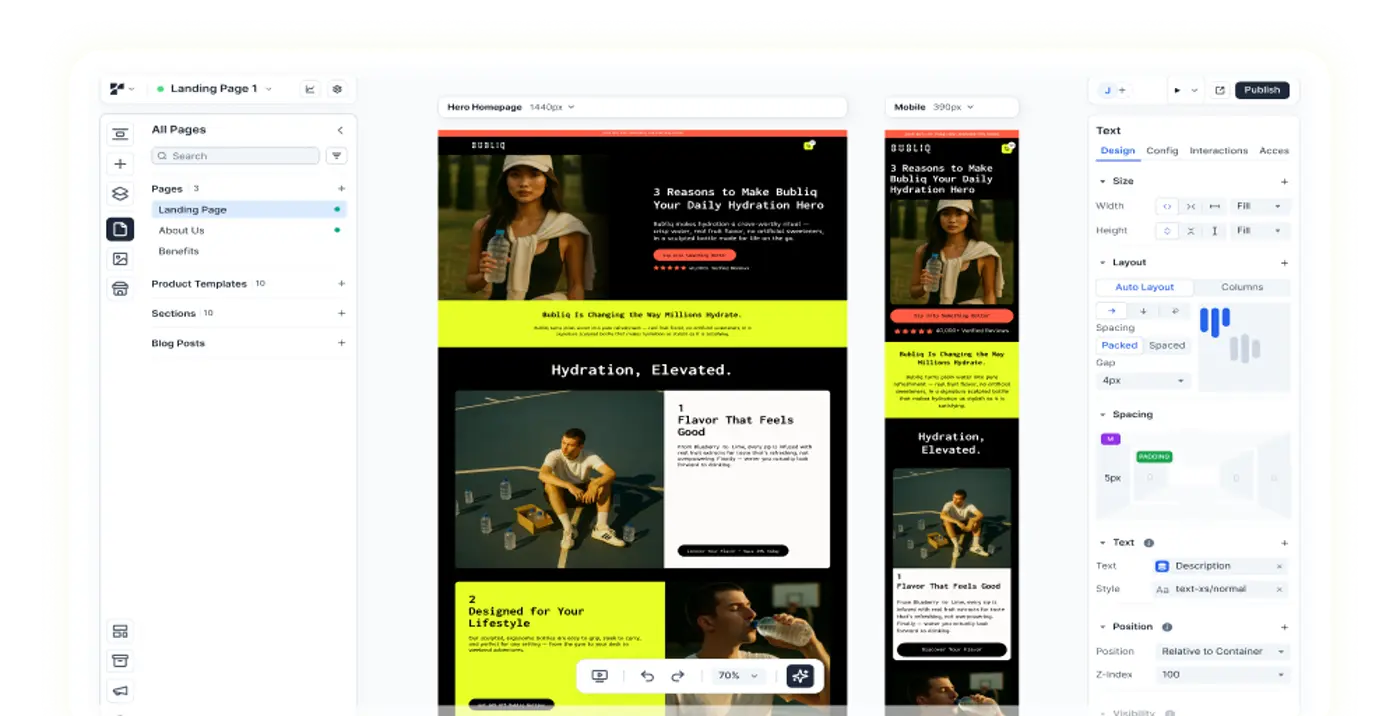
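If you want to sanity-check a calculator, the sketch below uses a standard sample size formula for a two-sided two-proportion z-test. The function name and the traffic figure are assumptions for illustration. Calculators differ slightly in the formula they use, so expect a number in the same range as the one above, not an exact match.

```python
from math import ceil, sqrt
from statistics import NormalDist

def sample_size_per_variation(baseline, relative_mde, alpha=0.05, power=0.80):
    """Visitors needed per variation to detect a relative lift (two-sided z-test)."""
    p1 = baseline
    p2 = baseline * (1 + relative_mde)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for 95% confidence
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)

n = sample_size_per_variation(baseline=0.02, relative_mde=0.15)
monthly_visitors = 5_000  # assumed traffic to the tested page
months = 2 * n / monthly_visitors  # two variations share the traffic
print(f"{n:,} visitors per variation, about {months:.1f} months of traffic")
```

Running the numbers this way also makes the trade-off visible: doubling the MDE cuts the required sample to roughly a quarter, which is why low-traffic stores are better off testing bolder changes.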

Step 5: Create Your Page Variation

In your page building platform of choice, make the necessary edits. Keep everything else the same.

For this example, that means moving the “Add to cart” button to be above the fold.

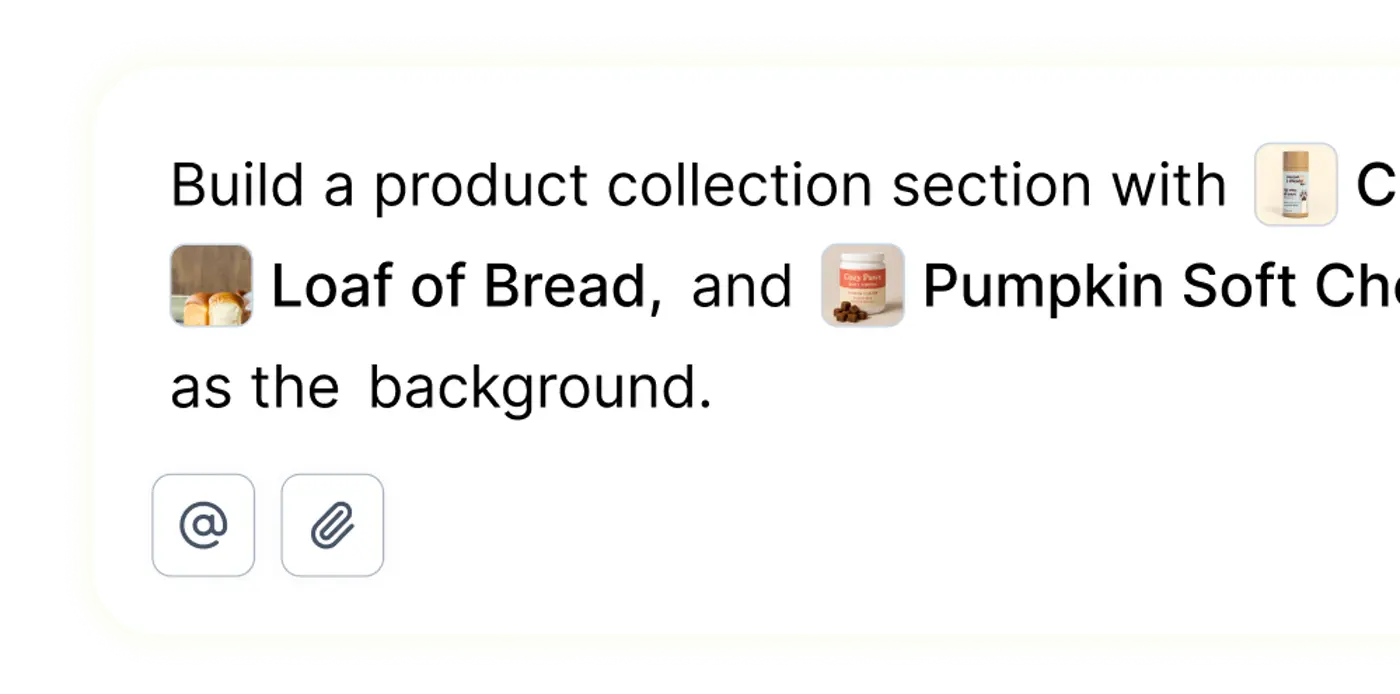

In Replo Builder, you can do this without any manual edits. Simply prompt the chat by typing in “move the add to cart button above the fold” and hitting enter, and Replo will do it for you.

For more complex edits (not needed for a beginner A/B test, but necessary for more advanced tests), you can give the Replo chat a list of edits to work through, and it can execute on all of them at once.

Once you’re done, name the page clearly so you know if this is the variant or the control.

Step 6: Set Up Your A/B Testing Platform

There are many A/B testing platforms out there for you to choose from.

For the most streamlined A/B testing process that comes built-in with our campaign builder, use Replo. Create and edit page variations, and set up and run your experiments—all in the same app.

With Replo, you can create forever links that are reused across multiple (non-simultaneous) paid ad campaigns, such as on Meta, so you don’t need to retrain your ad algorithms every time you run a new A/B test.

Plus, you can test pages built in any platform of your choice, not just on Replo.

All you have to do is create your Replo forever link which drives traffic to the A/B test, and then copy and paste in the URL of your original page and your variant page.

Set up your tracking with a 50/50 traffic split between the control and variant. Replo enables you to set up as many variants as you would like, but for a beginner A/B test, we only want a two version split test.

In the case of this example, set your primary goal as landing page conversion rate.
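Your testing platform handles the traffic split for you, but it can help to see the idea behind it. Below is a minimal sketch of one common approach, hash-based bucketing, where each visitor ID is mapped to a version deterministically so that returning visitors always see the same page. This is our own illustration, not a description of how Replo or any other platform implements it; the experiment name and visitor IDs are made up.

```python
import hashlib

def assign_variant(visitor_id, experiment="add-to-cart-above-fold",
                   variants=("control", "variant")):
    """Deterministically bucket a visitor so they always see the same version."""
    key = f"{experiment}:{visitor_id}".encode()
    digest = hashlib.sha256(key).hexdigest()
    # The hash is effectively uniform, so the split is close to even
    return variants[int(digest, 16) % len(variants)]

print(assign_variant("visitor-123"))
print(assign_variant("visitor-123"))  # same visitor, same version
```

Including the experiment name in the hash means the same visitor can land in different buckets across different experiments, which keeps one test from mirroring another.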

Step 7: QA Your Experiment

Before you launch your A/B test, you’ll want to visit the experiment link across a number of browsers and devices. Test all links and buttons (especially the one that you are tracking!).

Items to QA include:

- Chrome, Safari, Firefox

- Desktop, mobile, tablet

- Different screen sizes

- Verify tracking fires correctly

Step 8: Launch Your Experiment

Here’s a quick rundown of things to do and not to do:

- Set test to run for minimum 4 weeks (2 full business cycles)

- Don't stop early even if you see significance

- Document start date and expected end date for recordkeeping

Step 9: Monitor The Test (But Don't Interfere)

Check your A/B test weekly for:

- Technical issues (broken pages, 404 links, tracking problems)

- Traffic distribution (this should stay close to an even 50/50 split!)

Avoid stopping the test prematurely (even if you already hit a 95% confidence level!), don't run other tests on the same page simultaneously, and don’t change anything mid-test.

Doing so will corrupt any data that you’ve already collected and make your collected results untrustworthy.
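How close to 50/50 is close enough? One way to check is a chi-square goodness-of-fit test, often called a sample ratio mismatch check. The sketch below is a minimal version with made-up visitor counts; the function name and the 0.01 alert threshold are our own assumptions.

```python
from math import erfc, sqrt

def sample_ratio_p_value(visitors_a, visitors_b):
    """Chi-square goodness-of-fit p-value (1 degree of freedom) against a 50/50 split."""
    expected = (visitors_a + visitors_b) / 2
    chi_sq = ((visitors_a - expected) ** 2 + (visitors_b - expected) ** 2) / expected
    # Survival function of a chi-square distribution with 1 degree of freedom
    return erfc(sqrt(chi_sq / 2))

for a, b in [(5_000, 5_100), (5_000, 5_400)]:
    p = sample_ratio_p_value(a, b)
    status = "investigate the split" if p < 0.01 else "looks fine"
    print(f"{a:,} vs {b:,}: p = {p:.4f} ({status})")
```

A small imbalance like 5,000 versus 5,100 is normal noise, while a gap like 5,000 versus 5,400 is very unlikely to happen by chance and usually points to a tracking or redirect problem.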

Step 10: Check Statistical Validity

Before analyzing your test results, verify that the following is true:

- Sample size reached: Did each variation get your calculated minimum visitors?

- Test duration met: Ran for at least 2 weeks spanning complete business cycles?

- Statistical significance achieved: Confidence level ≥95%?

- No external factors: No major sales, seasonal marketing campaigns, or technical issues during the test?

If any answer is NO, keep the test running or restart it.

Step 11: Analyze Your A/B Test Results

Given the set-up of our experiment, there are three possible outcomes.

- There is a clear winner

- There is no significant difference between the control version and the test version

- There is a clear losing version

In the case that there is a winner, you will want to document the learning and apply the insight across your entire site or other similar pages where applicable. You will also want to calculate the revenue impact to record how much difference the test result made to your business.

In the case where there is no clear winner, you will keep the control, but also document the results of the test. Consider why the test failed: Was the hypothesis wrong? Was the change too subtle?

Note: An inconclusive test is not necessarily a failed test—you simply learned what doesn't work and prevented a potentially harmful change.

Finally, in the case where a clear losing version is determined, keep the control and document the change and its impact. Be happy that you prevented a change that would've cost your store money!

Study this learning and apply it when developing future hypotheses. For example, you can test adding key product info above the fold alongside the CTA button, and see if that combination generates a positive result.

In this way, you can see how A/B test results build on each other over time.

While your key metric might be conversion rate or add-to-cart rate, other secondary metrics that you may want to look at include revenue per visitor, average order value, and bounce rate. These metrics can provide indicators for areas where you’d like to improve or test later on.

In addition, you will want to analyze audience behaviors across the two versions by segment.

For example, we can look at:

- Device type (mobile vs. desktop)

- New vs. returning visitors

- Traffic source (organic, paid, direct)

- Geographic location

You may be surprised by the data you can find there! Many times, segmenting allows you to dissect larger scale audience trends into more specific (and actionable) insights that you might have missed otherwise.

For example, a possible insight could be: "The overall test showed no clear winner, but mobile users increased conversions 45% while desktop decreased 10%. We should implement the variation for the mobile store only."
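Here is a minimal sketch of how a per-segment comparison like that could be computed. All counts are hypothetical and chosen only to mirror the example insight; the function name is ours.

```python
def relative_lift(control_conv, control_n, variant_conv, variant_n):
    """Relative change in conversion rate from control to variant."""
    control_rate = control_conv / control_n
    variant_rate = variant_conv / variant_n
    return (variant_rate - control_rate) / control_rate

# Hypothetical (control conversions, control visitors, variant conversions, variant visitors)
segments = {
    "mobile": (60, 3_000, 87, 3_000),
    "desktop": (80, 2_000, 72, 2_000),
}

for name, counts in segments.items():
    print(f"{name}: {relative_lift(*counts):+.0%}")
```

Keep in mind that each segment contains only part of your traffic, so a segment-level difference needs its own significance check before you act on it.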

As you work through cycles of A/B testing, you will gradually develop a better understanding of what elements to test and what insights or takeaways to build on. Oftentimes, you will realize that the result of one A/B test leads to more hypotheses to experiment with.

That’s a good thing! It means that you are gradually working your way through methods to improve your store, whether it be by implementing proven positive ROI changes, or knowing to avoid proven harmful changes.

With A/B testing, you get a scientific way to make decisions about your store, instead of relying on “gut feeling” or “guesswork.”

A/B Testing Strategy For Advanced Users

Advanced users with substantial testing experience can try multivariate testing when they have sufficient traffic to test multiple element combinations at the same time. Generally, this means an average threshold of 500,000+ monthly visitors for significant results.

Personalization testing is possible at this stage, and you can segment experiences by customer type, device, traffic source, geography, and behavioral patterns to deliver targeted experiences.

Teams can develop more long term strategic roadmaps. A common example is rolling out 90-day plans across three tiers of testing:

- Foundation tests addressing known conversion blockers or weak spots

- Optimization tests improving existing elements through iteration

- Innovation tests exploring new strategies

While not necessary, teams typically run 4-8 active tests simultaneously. Dedicated team members can include CRO strategists, data analysts, UX designers, copywriters, and sometimes even developers.

How To Run A/B Testing For Advanced Users

If you're already familiar with A/B testing, follow this step-by-step guide to run more advanced experiments.

When You Should Start Advanced A/B Testing

You are ready to advance when:

- Your store consistently gets 200,000+ monthly visitors

- Your tests have a consistent 10-20% win rate, which demonstrates functional and stable testing processes

- Your current testing velocity is 1-2 tests per month, and the number of tests you run is bottlenecked by traffic, not ideas. You have a backlog of 20+ validated hypotheses, and your simpler A/B tests have been yielding diminishing returns

- You want to test complex interactions between multiple elements

- You'd like to run tests that require audience segment-specific optimization

- You want to run simultaneous tests without interference

While Replo A/B Testing is great for simple and accessible A/B tests that even beginners can run, you will need a different set of tools for a more advanced A/B test.

These apps come built in with more complex and powerful testing and results-analysis functions. Here are a few that we recommend looking into, each with their own specific use cases:

- Need multivariate testing → VWO, Optimizely

- Looking into server-side testing → Required for app testing, complex flows

- Require personalization → Dynamic Yield, Optimizely

In addition, if you get 500,000+ monthly visitors to your store, you may want to consider upgrading to an enterprise plan on your store building platform.

Replo offers custom enterprise plans tailored to your traffic needs, so larger businesses can build and optimize campaigns to sell better in less time.

What You Should A/B Test

Now that we’ve covered when you should test, let’s cover what you should target.

In general, you want to stop testing minor design or copy changes that don’t move the needle significantly on business topline. Instead, prioritize the following:

- Revenue-per-visitor optimization (not just conversion rate)

- Customer lifetime value impacts

- Cross-sell/upsell strategies

- Subscription/retention mechanics

- Complex UX patterns

These things drive far greater impact for your business and can alter your online customer experience as a whole.

1. Complete Experience Redesigns

Instead of making small-scale single element changes to a single page, brands will make large scale changes to entire page layouts. This test applies specifically to only one page.

Make sure to document every single change made for record keeping and comparison after the test is completed.

2. Multi-Step Funnels

This applies to A/B tests which involve more than one page. Multiple landing pages and CTAs are included between the user's first click and final destination.

Examples include:

- Ad to checkout funnel optimization: for example, a flow that goes from a paid Meta ad to an advertorial to a product page to a checkout page

- Onboarding sequences: these include sign-up forms, product tours, and pop-ups

- Post-purchase upsell flows: for example, going from the checkout page to an upsell page with a limited-time 24-hour bundle deal

3. Algorithmic Components

These are the A/B testing components that require developer assistance. The possibilities are endless here, but here’s a quick list of the most commonly seen examples:

- Product recommendation engines: When executed successfully, your product recommendation sections are a great way to upsell customers on slow-moving inventory.

- SEO page search results: Make sure your products are surfaced for the relevant queries.

- Dynamic pricing displays: Run audience targeted pricing that takes into account buyer history and preferences.

4. Personalization by Segment

Every audience segment behaves a little differently. Use A/B testing to see what makes them tick. Advanced tracking will be required to properly measure segment behavior.

Here are a few audience segments to start testing with:

- First-time vs. returning visitors

- Cart abandoners vs. browsers

- High-average order value vs. average customers

- Device type (mobile/desktop/tablet)

- Traffic source (paid/organic/direct/email)

5. Technical Infrastructure

This is one of the least flashy and interesting parts of A/B testing, but technical performance has been proven to significantly impact user experience and, by extension, shoppers' willingness to buy on your site.

Our top list of things to test include:

- Page load speed: This one is at the very top of our list. Slow page speeds are one of the greatest killers of traffic and buying intent.

Replo gives you the fastest loading pages on the market. In head-to-head tests (e.g., Brandefy and Nivie look-alike page builds versus original brand pages), Replo pages scored ~20 points higher in PageSpeed Insights.

- Mobile app feature tests: Applicable for stores that have a pre-existing mobile app. If not, you will want to test your store performance on mobile devices versus desktop (referencing our previous section for “Personalization by Segment”).

Step-by-Step Guide For Advanced A/B Testing

Now, let’s dive into an example of what an advanced A/B test might look like.

Here we are conducting a multivariate test with three different factors.

All numbers included here are hypothetical to help illustrate our points for this example, so make sure to adapt the goals and metrics of this guide to fit your own test!

Let’s say we are a brand with the following status quo:

- 300K monthly visitors

- Current checkout conversion: 68%

- 3-page checkout flow

- Multiple elements need optimization simultaneously

Step 1: Advanced Research and Analysis

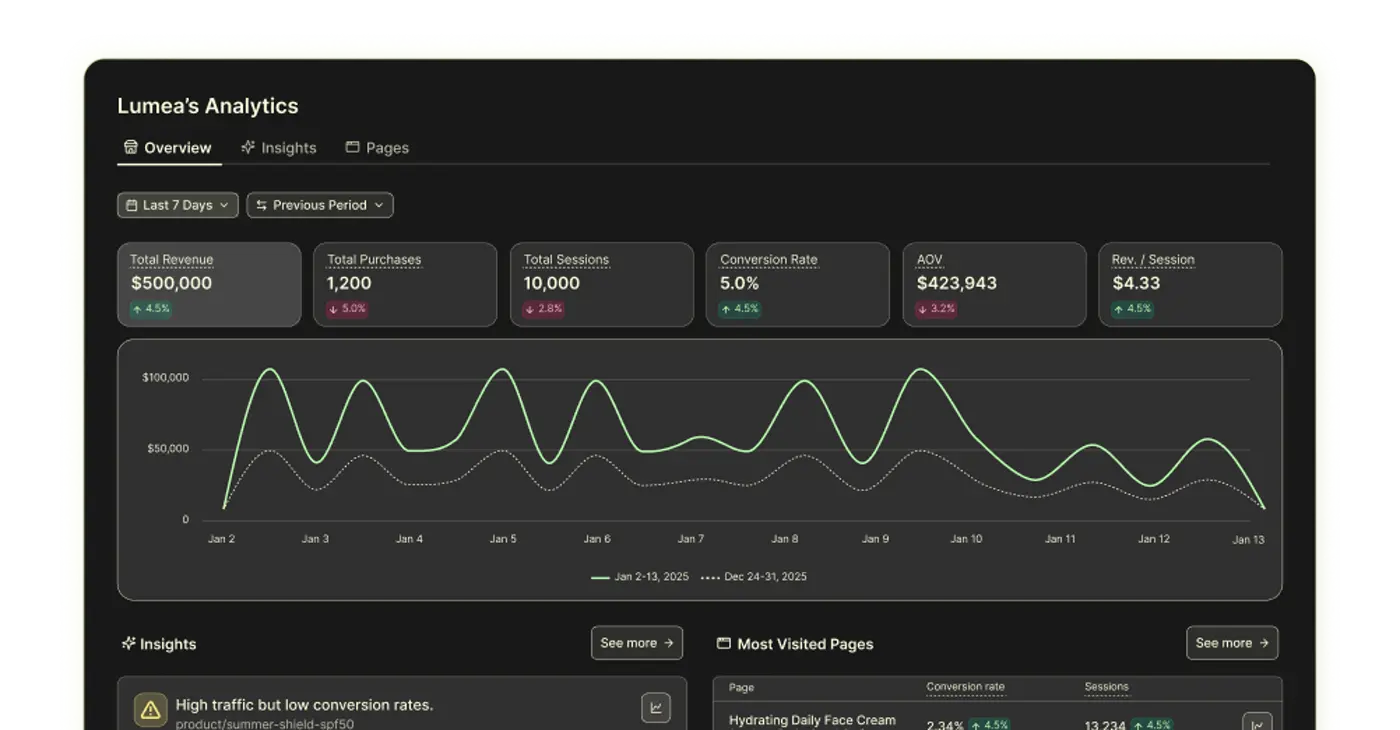

Refer to your first party or third party analytics dashboards with the goal of translating numbers into insights and trends about user behavior.

In this example experiment, we studied:

- Funnel drop-off by step, device, traffic source

- Session replay analysis of 200+ checkout abandonments

- Exit survey data (50+ responses)

- Correlation analysis between cart value and completion rate

Here’s what we found:

- 18% abandon at shipping page (mobile: 24%, desktop: 12%)

- 14% abandon at payment page

- Users with $200+ carts abandon 15% less often than users with $50 carts

- 60% of mobile users scroll back up to review cart

Step 2: Hypothesis Development

After we’ve collected all our data and established a few user trends, we can start forming hypotheses on what we’d like to test.

A common hypothesis framework is the PXL Framework (31 questions across 6 categories) which prioritizes neutral, objective yes-or-no questions.

Other frameworks to consider include the PIE and ICE frameworks, though those formats are more susceptible to subjective biases and may be too open-ended.

We used the PXL framework to determine that the hypothesis:

- Is based on quantitative data

- Addresses a high-traffic page

- Is easy to build

- Includes a change noticeable enough to shift user behavior

This is the primary hypothesis we ended up with:

"By reducing form fields from 12 to 8 on the shipping page, adding persistent cart summary sidebar, and implementing address autocomplete, we'll increase checkout completion by 8-12% because friction analysis shows field count and cart visibility as primary abandonment drivers."

To break things down, our A/B test would be a 2x2x2 multivariate test testing the following:

- Factor A: Form fields (12 fields vs. 8 fields)

- Factor B: Cart summary (collapsible vs. persistent sidebar)

- Factor C: Address entry (manual vs. autocomplete)

- Total combinations: 8 variations
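The eight variations are simply every combination of the three factors. A short Python sketch can list them out, which is handy when naming pages or setting up the test. The dictionary keys are labels of our own choosing.

```python
from itertools import product

# Each factor lists the control level first, then the new level
factors = {
    "form_fields": ("12 fields", "8 fields"),
    "cart_summary": ("collapsible", "persistent sidebar"),
    "address_entry": ("manual", "autocomplete"),
}

variations = [dict(zip(factors, combo)) for combo in product(*factors.values())]

for number, variation in enumerate(variations, start=1):
    print(number, variation)
```

The first combination (12 fields, collapsible cart, manual address entry) is the control, and the last one applies all three changes at once.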

Step 3: Multivariate Test Setup

We start by calculating the requirements for our A/B test. The minimum detectable effect is set by our team, based on the smallest change in conversion performance we want to be able to detect.

The traffic needed per variation is calculated using this statistical significance calculator to make sure our results are not due to chance.

- Baseline: 68% conversion, 300K monthly visitors

- Minimum detectable effect: 6% relative lift (meaning the MDE is a percentage of the baseline and not a direct value added on)

- 8 variations = need 240K visitors (30K per variation)

- Timeline: 4-5 weeks

Next, you’ll want to create the experiment in your A/B testing platform of choice. With Replo, you can integrate directly with top apps, such as Optimizely and VWO.

- Create 8 variations in testing platform

- Set primary goal: Order conversion

- Set secondary goals: Revenue per visitor, time to complete

- Configure traffic allocation: Equal across all 8

- Set up segment tracking: Device, cart value, traffic source

Then, create your variant pages according to each factor that you want to test. Replo Builder allows you to implement design, content, and animation changes on a page-wide level with minimal manual editing.

Simply input the list of elements you’d like to add and remove, including references to your products and assets (all directly accessible from the editor!) as needed. Use URLs, ad creative, screenshots, or even Figma exported images to prompt the chat to make the changes you need. And yes, Replo can make multiple changes at once.

For more design control, click on any element on the page and select “Edit” to directly modify an element.

- Use server-side testing for checkout (more stable than client-side)

- Implement address autocomplete via Google Places API

- Build persistent cart component

- Set up conversion tracking via backend order API

Once you’re done, start QA-ing the page! You want to test it in every use case and segment possible, to make sure all the data you collect during the test is as accurate as possible.

- Test all 8 variations across 6 browsers × 3 devices = 144 tests

- Verify tracking fires for each conversion event

- Load test to ensure no performance degradation

- Set up alerts for tracking failures as a failsafe

Step 4: Launch A/B Test

To be safe, start by deploying the test to a portion of your traffic on the first day. For this test, we chose 20%. Monitor error rates and page load times while it is deployed and make sure that you get an even distribution across variations.

If everything looks good, you can ramp the test to 100% of your traffic.
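One way to check for an even distribution across variations is a chi-square goodness-of-fit test on the visitor counts, often called a sample ratio mismatch check. The sketch below assumes 8 variations with equal allocation and a 5% significance level. The visitor counts are made-up illustrations, not data from this test.

```python
# Chi-square critical value for 7 degrees of freedom (8 variations - 1) at alpha = 0.05.
CRITICAL_VALUE_DF7 = 14.067


def chi_square_statistic(observed):
    """Goodness-of-fit statistic against an equal split across all variations."""
    expected = sum(observed) / len(observed)
    return sum((count - expected) ** 2 / expected for count in observed)


def is_evenly_split(observed):
    """Return True when the counts are consistent with an equal 8-way split."""
    if len(observed) != 8:
        raise ValueError("This sketch hardcodes the critical value for 8 variations.")
    return chi_square_statistic(observed) <= CRITICAL_VALUE_DF7


# Hypothetical day-one visitor counts per variation.
healthy = [7510, 7480, 7525, 7490, 7470, 7535, 7500, 7490]
skewed = [9000, 7300, 7300, 7300, 7300, 7300, 7250, 7250]
print(is_evenly_split(healthy), is_evenly_split(skewed))
```

If the check fails, pause the ramp and look for redirect errors, caching, or bot traffic before trusting any conversion data.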

Step 5: Sequential Analysis

Unlike in beginner tests, you will want to check your results weekly rather than waiting until the very end of the experiment. This approach is known as sequential testing.

Things you can look out for include:

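- Adjusted significance thresholds: checking results repeatedly inflates the false positive rate (the peeking problem), so each interim check needs a stricter threshold than a single final check would.

- Interaction effects between factors: how different factors can interact with each other to create a more positive or negative result than each factor would on its own.

- Segment performance differences: whether certain devices, cart values, or traffic sources respond differently to a variation.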

You can consider stopping the experiment if and only if:

- A variation shows >50% probability of being worse than control with 95% confidence

- The test’s statistical significance AND minimum sample size are met AND the full business cycle is completed
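To make the adjusted threshold idea concrete, here is a minimal sketch using a Bonferroni correction across the planned weekly checks. This is a deliberately simple and conservative choice on our part: testing platforms typically use more refined sequential methods, so treat it as an illustration rather than what your tool does. The function names and the five planned looks are assumptions.

```python
def per_look_alpha(overall_alpha: float, planned_looks: int) -> float:
    """Split the overall false positive budget evenly across interim checks."""
    if planned_looks < 1:
        raise ValueError("planned_looks must be at least 1")
    return overall_alpha / planned_looks


def significant_at_look(p_value: float, overall_alpha: float = 0.05,
                        planned_looks: int = 5) -> bool:
    """A weekly result only counts if it clears the stricter per-look threshold."""
    return p_value < per_look_alpha(overall_alpha, planned_looks)


# With 5 weekly checks, p = 0.03 would pass a single final check at 0.05,
# but it does not clear the adjusted per-look threshold.
print(significant_at_look(0.03), significant_at_look(0.004))
```

Decide the number of looks before launch. Adding extra checks mid-test brings the peeking problem straight back.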

Step 6: Analyze A/B Test Results

Once the A/B test is finished, you can start looking at the results of your multivariate test and drawing conclusions.

To start, we took a look at the main effects of the three individual factors we altered:

- 8 form fields versus 12 form fields

- A persistent cart versus a collapsible cart

- Address autocomplete versus manual address form

Main Effects Analysis

Here are the numbers we got from our results:

Factor A (Form Fields):

- 8 fields: 71.2% conversion (+3.2 points absolute, +4.7% relative to the 68% baseline)

- 12 fields: 68.5% conversion (baseline)

Conclusion: Field reduction drives a +3.9% relative lift (71.2% versus 68.5%) with 98% confidence

Factor B (Cart Summary):

- Persistent: 70.8% conversion (+4.1% relative to the 68% baseline)

- Collapsible: 69.1% conversion

Conclusion: Persistent cart drives +2.5% lift with 96% confidence

Factor C (Address Autocomplete):

- Autocomplete: 70.4% conversion (+3.5% relative to the 68% baseline)

- Manual: 68.2% conversion

Conclusion: Autocomplete drives +3.2% lift with 97% confidence

Interaction Effects Analysis

Next, we want to test for statistical interactions between factors.

Interaction effects are when the impact of one factor depends on the level of another factor. In other words, combining two changes produces a result that's different from simply adding their individual effects together.

The process of calculating interaction effects is more mathematically complex. On the bright side, this can usually be done with a more advanced A/B testing tool, such as Optimizely or VWO.

For example, here’s what our interaction effects analysis found:

- Form fields × Cart summary: No significant interaction

- Form fields × Autocomplete: Strong positive interaction (p=0.03)

- Cart summary × Autocomplete: No significant interaction

Our analysis also tells us that combining reduced fields with autocomplete produces an 11% lift in conversion rate, higher than the 9.9% we would expect from simply adding the two individual effects together.

From this, we can conclude that the best performing variation includes all three changes, as each change on its own generates a statistically significant positive impact. On top of that, the interaction between reduced fields and address autocomplete creates additional positive effects.
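To see what "different from simply adding their individual effects together" looks like in numbers, here is a small sketch that compares the observed result of combining two changes against the additive expectation. The four conversion rates are hypothetical and are not taken from this test. The sketch only gives a point estimate: judging whether an interaction is statistically significant needs a proper model, which is where your testing tool comes in.

```python
def interaction_effect(neither, a_only, b_only, both):
    """Observed combined rate minus the rate expected if the two effects simply added up.

    All inputs are conversion rates in percent; the result is in percentage points.
    """
    effect_a = a_only - neither
    effect_b = b_only - neither
    additive_expectation = neither + effect_a + effect_b
    return both - additive_expectation


# Hypothetical cell-level conversion rates (percent) for a 2 x 2 slice of the test.
extra_points = interaction_effect(neither=68.0, a_only=70.0, b_only=69.5, both=72.5)
print(extra_points)
```

A positive value means the two changes reinforce each other, a negative value means they partly cancel out, and a value near zero means the effects are roughly additive.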

To summarize:

- 8 fields + Persistent cart + Autocomplete

- Conversion: 73.8% (+5.8 points absolute, +8.5% relative to the 68% baseline)

- Revenue per visitor: +14.2% (users completing faster checkout have higher AOV)

- Confidence: 99.2%

Segment Analysis

Last but certainly not least, we want to look at how different user segments interact with each factor change in different ways.

Oftentimes, looking at variant effects across segments reveals further nuance that brands might not have expected from looking at the overall results alone.

For example, here are the user segments that we might want to dive into:

Mobile (180K visitors):

- Best variation: +15.8% conversion

- Key driver: Autocomplete (18% lift alone on mobile)

Desktop (120K visitors):

- Best variation: +8.2% conversion

- Key driver: Field reduction (9% lift alone on desktop)

Cart Value Segments:

- $0-50: +6.2% conversion

- $51-100: +11.4% conversion

- $100+: +16.8% conversion

From the above, we can see that autocomplete has outsized positive effects on mobile, and that higher-value carts benefit the most from optimization.

To illustrate and record the financial impact of our A/B test conclusions, it is also best practice to calculate the revenue impact and share it with the team for greater visibility.

For example:

- Previous conversion: 68% = 138,720 orders

- New conversion: 76.5% = 156,060 orders

- Incremental orders: 17,340/month

- Average order value: $85

- Monthly revenue increase: $1,473,900

- Annual impact: $17.7M
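The arithmetic behind a revenue impact summary like this is easy to script so the team can rerun it with updated numbers. In the sketch below, the 204,000 monthly checkout sessions are not stated in the list above. They are inferred from 138,720 orders at a 68% conversion rate, so treat that figure as an assumption. The function name is also just for illustration.

```python
def revenue_impact(sessions, baseline_rate, new_rate, average_order_value):
    """Estimate incremental orders and revenue from a conversion rate change."""
    baseline_orders = round(sessions * baseline_rate)
    new_orders = round(sessions * new_rate)
    incremental_orders = new_orders - baseline_orders
    monthly_revenue = incremental_orders * average_order_value
    return {
        "baseline_orders": baseline_orders,
        "new_orders": new_orders,
        "incremental_orders": incremental_orders,
        "monthly_revenue": monthly_revenue,
        "annual_revenue": monthly_revenue * 12,
    }


# Rates and order value from the list above; sessions inferred as 138,720 / 0.68.
impact = revenue_impact(sessions=204_000, baseline_rate=0.68,
                        new_rate=0.765, average_order_value=85)
print(impact)
```

Keeping the inputs as named parameters makes it simple to show a conservative and an optimistic scenario side by side when you present results.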

Step 7: Implement A/B Test Conclusions

So, now we know we want to roll out reduced fields, autocomplete for address form fields, and persistent carts on our pages.

We’d implement this in phases, usually starting with the target segments where implementation is easiest. We’ll monitor each change as we go, making sure everything is stable before continuing to the next one.

Finally, we want to share our learnings and insights, whether they come from our analysis of main effects, interaction effects, or segment effects.

For example, we’d want to share our finding that autocomplete matters most for mobile users with the marketing and store development teams, and implement this specific design pattern across all our mobile store pages.

Rather than making manual edits page by page across other page building platforms, you can simply drop in a page fix request into the Replo chat to implement page design changes as fast as possible.

To save time and get more precise results when prompting in Replo Builder, use URLs of the winning page designs from your A/B test, or even screenshots of sections and Figma designs exported as PNG files.

Check out this doc on how you can prompt Replo chat more effectively.

Not only can you implement A/B test conclusions faster across your site, you will also be able to set up and create experiment variants in less time to begin with.

With systematic, rigorous experimentation at scale, an advanced testing program can generate up to 50% cumulative conversion rate improvement over a 12-month period.


A/B Testing Tools: How To Use Replo For A/B Testing

Replo comes with our own built-in A/B testing tool, so you can create page and customer funnel variations, run split-tests, and implement winning results across your site all in the same platform.

For all beginner A/B testing users, Replo’s testing tool makes it easy to get started. You can create forever links that are reused across multiple (non-simultaneous) paid ad campaigns, such as on Meta, so you don’t need to retrain your ad algorithms every time you run a new A/B test.

Plus, we let users run experiments with a mix of pages built on any platform of your choice, not just on Replo.

Simply create your Replo forever link which drives traffic to the A/B test, and then copy and paste in the URL of your original page and your variant page.

Set up your tracking with a 50/50 traffic split between the control and variant. Replo enables you to set up as many variants as you would like, but for a beginner A/B test, we usually recommend starting with a simple two-variant split test.

For more advanced A/B testing purposes (such as multivariate testing), Replo natively integrates with all major A/B testing tools on the market, including but not limited to Optimizely, VWO, and Intelligems.

If you don’t see the app that you want to use available for direct integration, no worries. Replo enables integrating with virtually any app through our API.

Read our docs for more information on how to set up integrations to any testing app of your choice.

No matter the testing platform that you choose to run your experiments, Replo makes A/B testing faster and more effective for growing conversion for your brand.

How? By speeding up the flywheel for going from creating a variant page and making edits, to running an A/B test on your preferred platform, to implementing winning A/B test changes site-wide.

Replo builds custom conversion flows for your store, so you have time to focus on the actual decisions—iterating and optimizing your store—to drive more value for your business.

Build as many pages as you need for any test you run, faster than ever.

Don’t believe us? Check out our tutorial on 4 ways you can go from first click to live store in 5 minutes.

FAQs About A/B Testing

How much traffic do I need to start A/B testing?

You need a minimum of 1,000 weekly visitors (5,000+ monthly) and 100+ conversions per month. If you have less traffic, start with email A/B testing (500-1,000 subscribers) or PPC ad testing (5,000+ impressions) to build experimentation skills while growing site traffic.

How long should I run an A/B test?

Run tests for a minimum of 2-4 weeks (2 complete business cycles), even if you reach 95% confidence earlier. Don't stop prematurely—statistical significance can fluctuate, and you need to account for weekly variations in traffic patterns and customer behavior.
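As a rough planning aid, you can turn this rule into a quick calculation: work out how many days it takes to reach your required sample size, round up to whole weeks so every day of the week is covered equally, and never go below the two-week minimum. The function name and the traffic figures in the example are illustrative assumptions.

```python
import math


def duration_in_weeks(visitors_needed: int, daily_visitors: int,
                      minimum_weeks: int = 2) -> int:
    """Whole weeks needed to reach the sample size, with a minimum duration floor."""
    days = math.ceil(visitors_needed / daily_visitors)
    weeks = math.ceil(days / 7)  # round up so each weekday appears equally often
    return max(weeks, minimum_weeks)


# Illustrative inputs: 240,000 visitors needed at 10,000 visitors per day.
print(duration_in_weeks(240_000, 10_000))
# A small test that would fill up in 5 days still runs for the 2-week minimum.
print(duration_in_weeks(5_000, 1_000))
```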

Why should brands invest in A/B testing?

A/B testing transforms small optimizations into significant revenue gains—a 1% conversion increase on a $10M site adds $100K without additional ad spend, and multiple wins compound.

Beyond revenue, testing prevents costly mistakes by catching changes that would decrease conversions, eliminates gut-feel decision making with data, and reveals customer insights specific to your audience that generic best practices can't provide.

Can I stop my test early if it reaches statistical significance?

No. Stopping early inflates your false positive rate. Even at 95% confidence, you must wait for your predetermined sample size and test duration (2-4 weeks minimum). Premature stopping corrupts your data and makes results untrustworthy.
